When you start learning AI, the jargon is overwhelming.

Artificial Intelligence. Machine Learning. Deep Learning. Neural Networks. Transformers. Supervised. Unsupervised.

Let’s simplify everything — without skipping the important nuances.

1️⃣ The AI Hierarchy (The Big Picture)

Artificial Intelligence (AI) is the broad field of building systems that perform tasks usually associated with human intelligence:

- Recognizing patterns

- Understanding speech

- Interpreting text

- Making decisions

- Seeing and identifying objects

Inside AI → Machine Learning (ML)

Inside ML → Statistical ML and Deep Learning

They are related — but not identical.

What’s AI but NOT ML?

AI includes systems that don’t “learn” from data.

Examples:

- Regular expressions (pattern matching)

- Rule-based systems

- Parts of robotics

You can build an AI-like system using rules alone.

Machine learning is just one powerful way to build AI.
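To make the "rules alone" idea concrete, here is a minimal sketch of a rule-based spam flagger built on regular expressions. The patterns and the `is_spam` helper are invented for illustration; nothing in it is learned from data, which is exactly the point.

```python
import re

# Hand-written rules: nothing here is learned from data.
# The patterns are illustrative assumptions, not a real spam policy.
SPAM_PATTERNS = [
    re.compile(r"\bfree money\b", re.IGNORECASE),
    re.compile(r"\bclick here\b", re.IGNORECASE),
    re.compile(r"\bwinner\b", re.IGNORECASE),
]


def is_spam(message: str) -> bool:
    """Return True if any hand-written rule matches the message."""
    return any(pattern.search(message) for pattern in SPAM_PATTERNS)


print(is_spam("You are a WINNER, click here!"))  # True
print(is_spam("Meeting moved to 3 pm"))          # False
```

Every new kind of spam needs a new rule written by a person. That maintenance cost is one reason to let a model learn the patterns instead.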

2️⃣ What Is Machine Learning Really?

Machine learning is about learning patterns from data instead of hardcoding rules.

Traditional Programming

You give:

- Input

- Logic

You get:

- Output

Example:

y = x² (you write the rule yourself; for x = 3 the program returns 9)

Machine Learning (Training Phase)

You give:

- Input

- Output

The system learns:

- The logic (model)

That learned logic is stored as a model.

Two Phases of ML

1. Training

Input + Output → Learn the equation

2. Inference

New Input → Predict Output

That’s the core of the pipeline. Real projects add data preparation and evaluation around it.
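The contrast between the two approaches can be sketched in a few lines. This toy assumes we already know the answer has the shape y = w · x² and only the number `w` must be learned; the function names `train` and `predict` are made up for this sketch, and real ML searches over far richer model families.

```python
def square(x: float) -> float:
    """Traditional programming: the rule y = x^2 is written by hand."""
    return x * x


def train(inputs, outputs) -> float:
    """Training: estimate w in y = w * x^2 by least squares."""
    numerator = sum((x ** 2) * y for x, y in zip(inputs, outputs))
    denominator = sum(x ** 4 for x in inputs)
    return numerator / denominator


def predict(w: float, x: float) -> float:
    """Inference: apply the learned parameter to a new input."""
    return w * x ** 2


# Training data: inputs together with their known outputs.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [1.0, 4.0, 9.0, 16.0]

w = train(xs, ys)        # the "model" is just this one learned number
print(w)                 # 1.0
print(predict(w, 5.0))   # 25.0
```

Nobody typed the rule into `train`. It recovered `w = 1.0` from examples, and `predict` then reuses that stored value on an input it has never seen.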

3️⃣ Classification vs Regression

These are the two most common supervised learning problems.

🟢 Classification (Categories)

Examples:

- Spam / Not Spam

- Cat / Dog

- Fraud / Not Fraud

- News: Business / Sports / Tech / Health

Two categories → Binary classification

More than two → Multiclass classification

Output = Discrete label
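As a rough illustration of "output = discrete label", here is a tiny 1-nearest-neighbor classifier. The two features (link count, exclamation-mark count) and all the numbers are invented for this sketch; nearest neighbor is just one simple classification technique among many.

```python
import math

# Toy labeled examples: (link_count, exclamation_count) -> label.
# The features and values are made up for illustration.
training_data = [
    ((0, 0), "not spam"),
    ((1, 0), "not spam"),
    ((5, 4), "spam"),
    ((7, 6), "spam"),
]


def classify(features):
    """Return the label of the closest training example (1-nearest neighbor)."""
    _, nearest_label = min(
        training_data,
        key=lambda example: math.dist(example[0], features),
    )
    return nearest_label


print(classify((6, 5)))  # spam
print(classify((0, 1)))  # not spam
```

Whatever the input, the answer is always one of a fixed set of labels, never a value "in between".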

🔵 Regression (Numbers)

Example: Home price prediction.

Platforms like:

- Zillow

- Magicbricks

- 99acres

They estimate property prices from past sales data, using features such as:

- Area

- Bedrooms

- Age

- Location

Output can be:

- 925K

- 921.45K

- 930K

The output is a continuous number with infinitely many possible values → That’s regression.
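A minimal regression sketch, assuming a single feature (area) and a straight-line relationship. The areas and prices below are invented, and `fit_line` is a hypothetical helper implementing ordinary least squares; real price models use many more features.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Invented data: area in square feet, price in thousands.
areas = [1000, 1500, 2000, 2500]
prices = [500, 700, 900, 1100]

slope, intercept = fit_line(areas, prices)
predicted = slope * 1800 + intercept   # price estimate for an unseen area
print(round(predicted, 2))             # 820.0
```

The prediction is a number on a continuous scale, so an area of 1801 would give a slightly different price rather than jumping to another category.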

4️⃣ Supervised vs Unsupervised Learning

Supervised Learning

You have labeled data.

Example:

Spam emails already tagged as spam or not spam.

This often requires manual labeling effort.

Unsupervised Learning

No labels.

Toy Bucket Analogy

Imagine a child cleaning toys.

Supervised:

You say:

- Bucket 1 → Dolls

- Bucket 2 → Cars

- Bucket 3 → Trucks

Unsupervised:

You say:

“Just divide into two buckets.”

The child may group:

- By color

- By size

- By type

That’s unsupervised learning.

Real Use Cases

📁 Document Clustering

Automatically grouping:

- Legal documents

- Invoices

- Requirement docs

Algorithms:

- K-Means

- DBSCAN

- Hierarchical clustering

DBSCAN is also used for outlier detection, because it marks points in low-density regions as noise.
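Here is a bare-bones K-Means sketch on unlabeled 2-D points. It assumes the starting centroids are passed in by hand so the result is repeatable (library implementations usually pick them randomly or with smarter seeding), and real documents would first have to be turned into numeric vectors.

```python
import math


def k_means(points, centroids, iterations=10):
    """Plain K-Means: alternate between assigning points and moving centroids."""
    clusters = []
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        clusters = [[] for _ in centroids]
        for point in points:
            distances = [math.dist(point, c) for c in centroids]
            clusters[distances.index(min(distances))].append(point)

        # Update step: move each centroid to the mean of its cluster.
        new_centroids = []
        for cluster, old in zip(clusters, centroids):
            if cluster:
                mean = tuple(sum(axis) / len(cluster) for axis in zip(*cluster))
                new_centroids.append(mean)
            else:
                new_centroids.append(old)  # keep an empty cluster's centroid
        centroids = new_centroids
    return centroids, clusters


# Unlabeled points (invented); we only ask for "two buckets".
points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
centroids, clusters = k_means(points, centroids=[(1, 1), (8, 8)])
print(clusters)
```

No labels were supplied anywhere. The grouping comes purely from which points sit close together, just like the child sorting toys without being told the categories.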

5️⃣ Tools for Statistical ML

Core stack:

- Python

- NumPy

- Pandas

- Matplotlib

- Seaborn

- Jupyter Notebook

- Scikit-learn

- XGBoost

Best suited for:

- Structured data (rows and columns)

6️⃣ Why Deep Learning Exists

Statistical ML works well for structured data.

But what about:

- Images?

- Audio?

- Text?

- Video?

These are unstructured data.

Deep learning handles this complexity by learning useful features directly from the raw data.

7️⃣ Neural Networks — Explained Intuitively

Imagine training students to detect a koala.

Each student has a specific role:

- One detects eyes

- One detects nose

- One detects ears

- One detects legs

- One detects tail

Each gives a score from 0 to 1.

Higher-level students combine these signals:

- Face detection

- Body detection

Finally, one student makes the decision.

That structure is a neural network.

- Early layers → Detect small patterns

- Deeper hidden layers → Combine them into higher-level features

- Final layer → Output decision

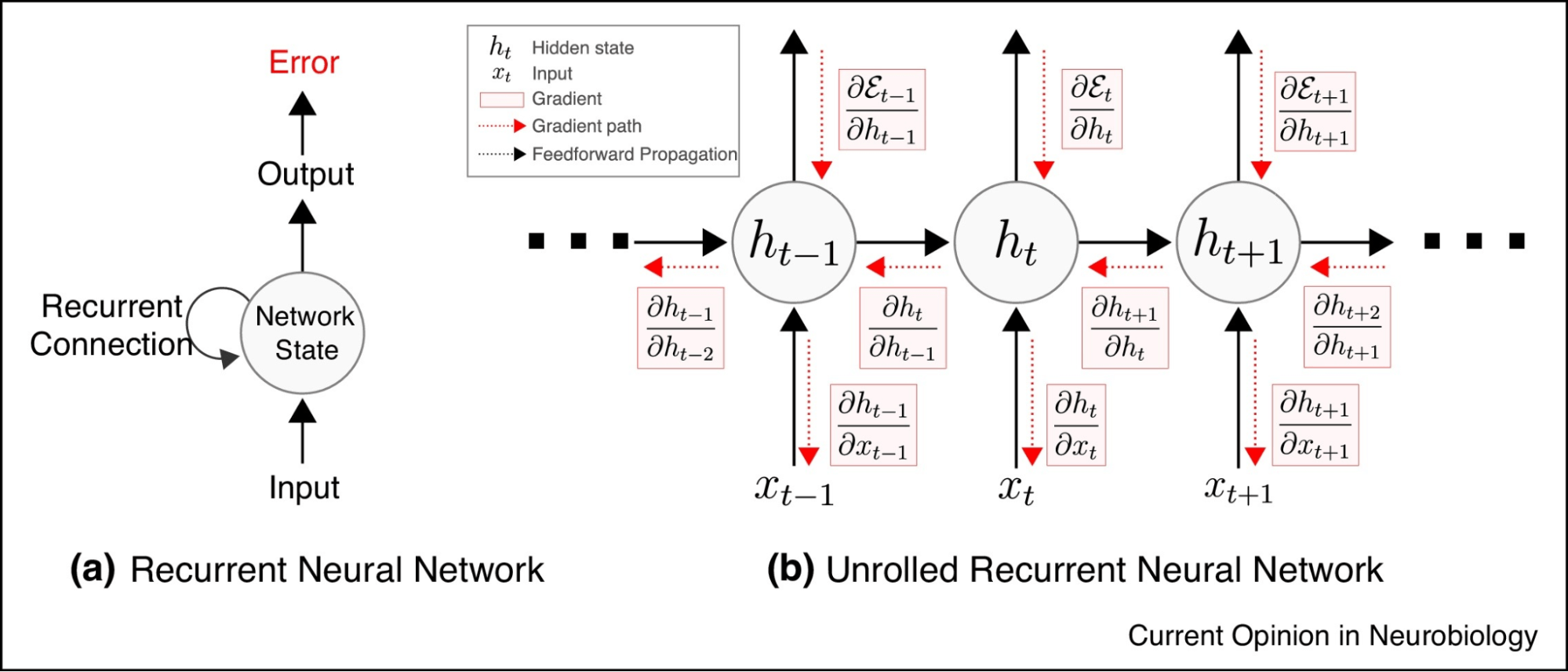
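The koala analogy maps onto a forward pass like the sketch below. The weights and biases are hand-picked assumptions so the example is readable; in a real network they would be learned, and the layers would not be given such tidy meanings as "face" and "body".

```python
import math


def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias):
    """Weighted sum of the inputs followed by a sigmoid."""
    return sigmoid(sum(i * w for i, w in zip(inputs, weights)) + bias)


def is_koala(part_scores):
    """Forward pass: part detectors -> face/body detectors -> final decision."""
    eyes, nose, ears, legs, tail = part_scores
    # Hidden layer: these weights are hand-picked for illustration,
    # not learned values.
    face = neuron([eyes, nose, ears], [2.0, 2.0, 2.0], -3.0)
    body = neuron([legs, tail], [2.0, 2.0], -2.0)
    # Output layer: combine the two higher-level signals into one score.
    return neuron([face, body], [3.0, 3.0], -3.0)


print(is_koala([0.9, 0.9, 0.8, 0.9, 0.7]))  # closer to 1
print(is_koala([0.1, 0.0, 0.2, 0.1, 0.0]))  # closer to 0
```

Each "student" is one call to `neuron`: it weighs the scores it receives and passes a single number between 0 and 1 up to the next level.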

How Training Works (Backpropagation)

Initially:

Everyone guesses randomly.

Supervisor says:

“You’re wrong.”

Error is passed backward.

Each student adjusts their weight (importance).

Repeat this for thousands of images.

Over time:

Accuracy improves.

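Passing the error backward to work out each weight’s share of the blame is called backpropagation.

Training as a whole uses:

- Derivatives (how much each weight contributed to the error)

- Gradient descent (move each weight a small step in the direction that reduces the error)

- Weight updates (repeated over many examples)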
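The loop below is a minimal sketch of that cycle for a single sigmoid neuron learning logical OR. With only one neuron the "backward pass" is a single derivative; a network with hidden layers applies the same chain rule layer by layer. The task, learning rate, and epoch count are assumptions chosen to keep the example small.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Toy task: learn logical OR. Inputs and targets are the labeled data.
data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights = [0.0, 0.0]   # start with no knowledge
bias = 0.0
learning_rate = 0.5

for epoch in range(2000):
    for inputs, target in data:
        # Forward pass: make a prediction.
        prediction = sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)
        # Backward pass: for a sigmoid output with cross-entropy loss, the
        # derivative with respect to the weighted sum is (prediction - target).
        error = prediction - target
        # Gradient descent: nudge each weight against its gradient.
        weights = [w - learning_rate * error * x for w, x in zip(weights, inputs)]
        bias -= learning_rate * error


def predict(inputs):
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


print([round(predict(x)) for x, _ in data])  # [0, 1, 1, 1]
```

The "supervisor says you’re wrong" step is the `error` line, and "each student adjusts their weight" is the update that follows it.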

8️⃣ Neural Network Architectures

Feedforward Neural Network

Information flows forward only:

Input → Hidden → Output

No loops.

Recurrent Neural Network (RNN)

Used for sequences.

The hidden state from one step is fed back in at the next step, giving the network a memory of earlier inputs.

Example use cases:

- Text generation

- Time series forecasting

- Speech recognition

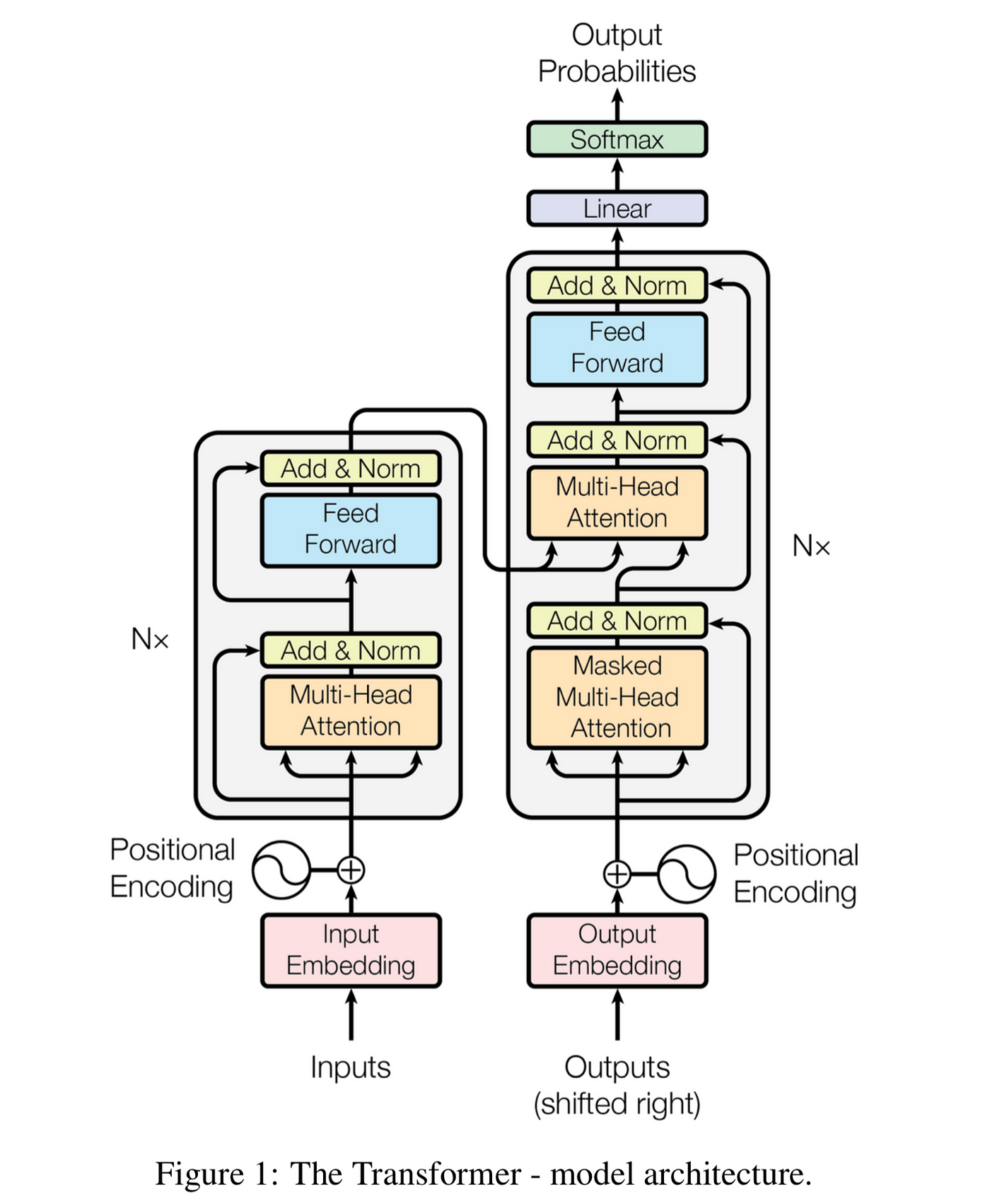
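A one-unit recurrent cell is enough to show the feedback loop. This sketch uses hand-picked weights (assumptions, not trained values) and a plain tanh cell; practical RNNs use vectors, learned weight matrices, and often gated variants such as LSTM or GRU.

```python
import math


def rnn_forward(sequence, w_input, w_hidden, bias):
    """Run a one-unit recurrent cell over a sequence; return every hidden state."""
    hidden = 0.0          # initial memory is empty
    states = []
    for x in sequence:
        # The previous hidden state is fed back in alongside the new input.
        hidden = math.tanh(w_input * x + w_hidden * hidden + bias)
        states.append(hidden)
    return states


# Hand-picked weights for illustration only.
states = rnn_forward([1.0, 0.0, 0.0], w_input=1.0, w_hidden=0.5, bias=0.0)
print(states)
```

Only the first input is non-zero, yet the later states are still above zero. That fading echo is the network remembering what it saw earlier in the sequence.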

Transformer Architecture

The foundation of modern generative AI.

It underlies models and products like:

- ChatGPT

- GPT-4

GPT = Generative Pre-trained Transformer

Transformers allow:

- Parallel processing

- Context understanding

- Long-range dependency modeling
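The mechanism behind those three properties is attention. Below is a stripped-down sketch of scaled dot-product self-attention in which queries, keys, and values are all the raw input vectors. That is a simplifying assumption: real transformers apply learned projections, use multiple heads, and add positional information. The token vectors are invented.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = inputs."""
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        # Score this token against every token in the sequence, near or far.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The output is a weighted average of all token vectors.
        outputs.append([
            sum(w * value[i] for w, value in zip(weights, vectors))
            for i in range(dim)
        ])
    return outputs


# Three toy token vectors; the first and third are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
attended = self_attention(tokens)
for row in attended:
    print([round(v, 3) for v in row])
```

Each token’s output depends on every other token regardless of distance, and no iteration of the outer loop needs the result of another, which is why the work can run in parallel.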

They power:

- Generative AI

- Agentic AI

9️⃣ Deep Learning vs Statistical ML

When choosing between them, evaluate:

1. Data Type

Structured → Statistical ML

Unstructured → Deep Learning

2. Data Volume

Small/Medium → Statistical ML works well

Massive datasets → Deep Learning often performs better

3. Feature Complexity

Simple features → Statistical ML

Complex hierarchical patterns → Deep Learning

Deep learning can handle structured data too, so treat these as starting points and let experiments on your own data decide.

🔟 Deep Learning Tooling

Frameworks:

- PyTorch

- TensorFlow

PyTorch:

- More intuitive

- Beginner-friendly

- Popular today

TensorFlow:

- Fine control

- Strong production ecosystem

Hardware:

- GPUs (local or cloud)

- Essential for large-scale training

Final Summary

AI → The umbrella

ML → Learns patterns from data

Statistical ML → Structured data

Deep Learning → Neural networks for complex patterns

Supervised → Labeled data

Unsupervised → No labels

Classification → Categories

Regression → Numbers

Transformers → Power modern generative AI

This structured understanding prevents confusion later.

Most people memorize terms.

Few understand the hierarchy.

That difference compounds over time.